In this research track we aim to improve the scalability of simulations, in particular of agent-based simulation applications. Simulation models are gaining importance in industry thanks to the rise of Digital Twins and Internet-of-Things environments. Such environments can be used not only to monitor real-time data, but also to simulate future scenarios. The large number of interconnected entities in these environments makes the scalability of such simulations a challenge. For example, optimizing traffic flows by automatically controlling traffic lights requires an enormous number of simulated vehicles. This scale is often infeasible for state-of-the-art micro-level traffic simulation frameworks. We tackle this challenge by improving scalability dynamically. One way is to partition a simulation over multiple compute units. Because the simulation load can vary across these compute units over time, we keep track of it in order to maintain the computational balance. Furthermore, we dynamically adjust the complexity of the simulation models, reducing it wherever a lower level of accuracy suffices.
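As a rough illustration of the load-tracking idea, the sketch below measures the imbalance between compute units and greedily moves work from the heaviest to the lightest one. It is not the framework's actual algorithm: the `Partition` class, the per-cell agent counts, the imbalance metric and the `threshold` value are all assumptions made for this example.

```python
from dataclasses import dataclass, field


@dataclass
class Partition:
    """One compute unit and the simulation cells assigned to it (hypothetical model)."""
    name: str
    cells: dict[str, int] = field(default_factory=dict)  # cell id -> agent count

    @property
    def load(self) -> int:
        # Assumption: load is approximated by the number of simulated agents.
        return sum(self.cells.values())


def imbalance(partitions: list[Partition]) -> float:
    """Ratio of the heaviest partition's load to the mean load (1.0 = balanced)."""
    loads = [p.load for p in partitions]
    mean = sum(loads) / len(loads)
    return max(loads) / mean if mean > 0 else 1.0


def rebalance(partitions: list[Partition], threshold: float = 1.2) -> int:
    """Greedily move cells from the heaviest to the lightest partition.

    Stops when the imbalance is at or below `threshold`, or when no single
    move can help. Returns the number of cells moved.
    """
    moves = 0
    while imbalance(partitions) > threshold:
        heavy = max(partitions, key=lambda p: p.load)
        light = min(partitions, key=lambda p: p.load)
        gap = heavy.load - light.load
        # Only cells smaller than the gap leave both partitions below the old maximum.
        candidates = {c: n for c, n in heavy.cells.items() if 0 < n < gap}
        if not candidates:
            break
        # Prefer the cell whose size is closest to half the gap.
        cell = min(candidates, key=lambda c: abs(candidates[c] - gap / 2))
        light.cells[cell] = heavy.cells.pop(cell)
        moves += 1
    return moves
```

Calling `rebalance` periodically during a run would approximate run-time redistribution. A real system would also have to account for migration cost and for communication between neighbouring cells, which this sketch ignores.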

A second approach we use to tackle large-scale testing is hybrid simulation. Hybrid simulation extends a physical testbed with a virtual environment. Digital twins are used to represent the physical assets in the virtual environment. The sensor data of the physical assets, in turn, is modified to represent the virtual assets (e.g., by drawing the virtual object in the camera view of the physical asset). This allows us to scale up physical testbeds and to validate complex scenarios between interacting intelligent agents (e.g., obstacle avoidance) without the risk encountered in physical tests.

Finally, closely related to hybrid simulation is our research on distributed digital twins. We research digital twin architectures, as well as methodologies for sharing local observations with nearby assets in a data-efficient way by taking data relevance and data quality parameters into account.
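To make the idea of relevance- and quality-aware sharing more concrete, here is a minimal sketch that selects which local observations to transmit within a byte budget. The `Observation` fields, the scoring rule (relevance times quality per byte) and the `min_quality` cut-off are assumptions for illustration, not the methodology used in this research.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    """A local observation a twin could share (all fields are illustrative)."""
    source: str
    relevance: float  # 0..1, expected usefulness to the receiving asset
    quality: float    # 0..1, e.g. sensor confidence or freshness
    size_bytes: int


def select_for_sharing(observations, budget_bytes, min_quality=0.5):
    """Pick observations to transmit within a byte budget.

    Observations below `min_quality` are dropped. The rest are ranked by
    (relevance * quality) per byte and added greedily while they still fit.
    """
    usable = [o for o in observations
              if o.quality >= min_quality and o.size_bytes > 0]
    ranked = sorted(usable,
                    key=lambda o: o.relevance * o.quality / o.size_bytes,
                    reverse=True)
    selected, used = [], 0
    for obs in ranked:
        if used + obs.size_bytes <= budget_bytes:
            selected.append(obs)
            used += obs.size_bytes
    return selected
```

The greedy ranking is a deliberately simple choice. It favours small, informative observations and makes the trade-off between bandwidth and usefulness explicit.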

Research examples

- Dynamic resource distribution: During simulation runtime, clusters of higher computational load may form dynamically. These clusters become computational bottlenecks in parallel and distributed simulations. We are developing a framework to detect these bottlenecks and resolve them at run-time by redistributing the environment to maintain the computational balance.

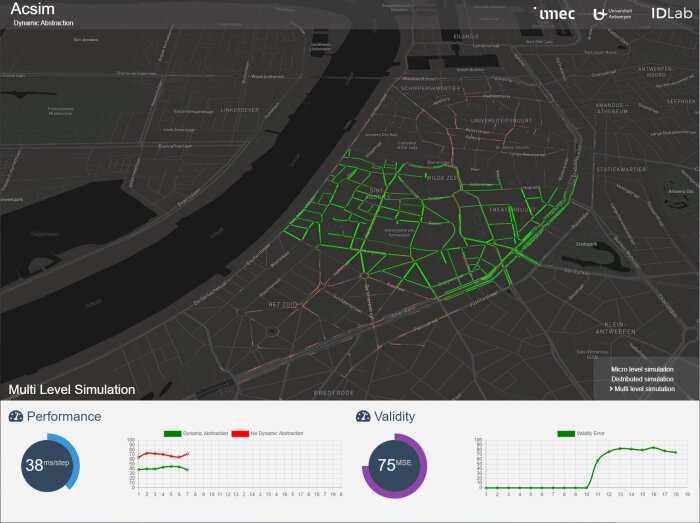

- Dynamic Model Abstraction: Not all simulation areas require the same level of detail; the level needed often depends on the application requirements. Based on these requirements, we are developing a method to dynamically switch between levels of model accuracy in specific simulation regions, lowering the use of computational resources where possible. Furthermore, we investigate novel data-driven models as an alternative to explicit models.

- Hybrid simulation: Large Internet of Things environments are extremely difficult to test in real-world lab scenarios due to their scale. Testing applications that involve cyber-physical systems such as vehicles, ships and robots, on the other hand, is challenging due to the risk of damage or injury. In both cases we could deploy the setup in a fully simulated environment. However, when we do so, we lose the link with reality: the environment might be too complex to fully capture in simulation, noise could be present on sensors in the IoT network, and so on. Therefore, we provide a link with a real-life environment using distributed digital twin technology, augmented reality and sensor simulation.
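The Dynamic Model Abstraction item above can be illustrated with a small sketch that picks, per simulation region, the cheapest model level that still meets the required accuracy. The level names, accuracy scores and cost figures are hypothetical placeholders and do not describe the models used in this research.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelLevel:
    """One abstraction level of a simulation model (values are illustrative)."""
    name: str
    accuracy: float        # 0..1, higher means more detailed
    cost_per_agent: float  # relative compute cost


# Hypothetical levels, ordered from detailed to coarse.
LEVELS = [
    ModelLevel("microscopic", accuracy=0.95, cost_per_agent=1.0),
    ModelLevel("mesoscopic", accuracy=0.80, cost_per_agent=0.3),
    ModelLevel("macroscopic", accuracy=0.60, cost_per_agent=0.05),
]


def choose_level(required_accuracy: float, levels=LEVELS) -> ModelLevel:
    """Return the cheapest level that meets the required accuracy.

    Falls back to the most accurate level if none meets the requirement.
    """
    adequate = [lvl for lvl in levels if lvl.accuracy >= required_accuracy]
    if not adequate:
        return max(levels, key=lambda lvl: lvl.accuracy)
    return min(adequate, key=lambda lvl: lvl.cost_per_agent)


def assign_levels(region_requirements: dict[str, float]) -> dict[str, str]:
    """Map each region to the name of its chosen abstraction level."""
    return {region: choose_level(req).name
            for region, req in region_requirements.items()}
```

Re-running `assign_levels` whenever the requirements of a region change would give a simple form of dynamic switching. Transferring agent state between levels, the harder part in practice, is outside the scope of this sketch.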

With hybrid simulation we connect physical testbeds with virtual agents to virtually scale up physical testing environments. This allows complex interactions between agents to be validated safely.

IDLab’s ACSIM simulation framework enables large-scale simulation of emergent behavior through novel adaptive load balancing and adaptive abstraction.

Involved faculty

Siegfried Mercelis