In recent years, Artificial Intelligence systems have matured significantly to the point of matching human performance in very specific tasks. However, despite their good performance, their complexity may hinder the features that they encode internally, or what information they take into account when reaching a specific decision.

Our research focuses on designing algorithms to provide existing systems with capabilities to interpret their internals and explain their decisions. In addition, we focus on interpretable-by-design algorithms that exploit causal relationships and disentangled representations.

Research examples

- Causal discovery: capture and visualise the cause-effect relationship between features. While traditional machine learning generates predictions from associations between the input and the output variables, causal machine learning goes further by determining the causal relationships among the variables. The result is a better generalisation and understanding of the underlying mechanism. Furthermore, given a causal model, it is possible to extract the details on how a certain prediction was made (by following the causal links), as well as variant methods to change the prediction.

- Post-hoc model interpretation algorithms that identify relationships between internal states or actications of given model and the predictions it makes. This allows to obtain insights on features internally encoded by a model. Moreover, recent research at IDLab suggests that this could assist the detection of adversarial samples.

- Interpretable-by-design algorithms capable of highlighting and disentangling relevant features that are critical for making accurate predictions.

-

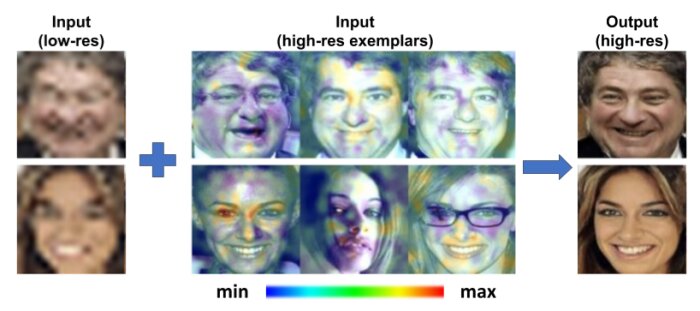

Image super-resolution based on multiple exemplars. The proposed method learns how to select regions (highlighted) from the exemplars to guide the super resolution process.

Image super-resolution based on multiple exemplars. The proposed method learns how to select regions (highlighted) from the exemplars to guide the super resolution process.

-

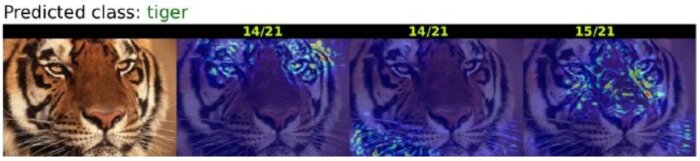

Our algorithm enriches the prediction (“tiger”) made by a base model with heatmaps highlighting different features of the input that determined the prediction.

Our algorithm enriches the prediction (“tiger”) made by a base model with heatmaps highlighting different features of the input that determined the prediction.